The Breker Trekker

Adnan Hamid, CEO of Breker
Adnan Hamid is the founder and CEO of Breker and the inventor of its core technology. Under his leadership, Breker has become a market leader in functional verification technologies for complex systems-on-chip (SoCs), and in Portable Stimulus in particular. The Breker expertise in the automation of …

Methodology Convergence
August 8th, 2019 by Adnan Hamid, CEO of Breker

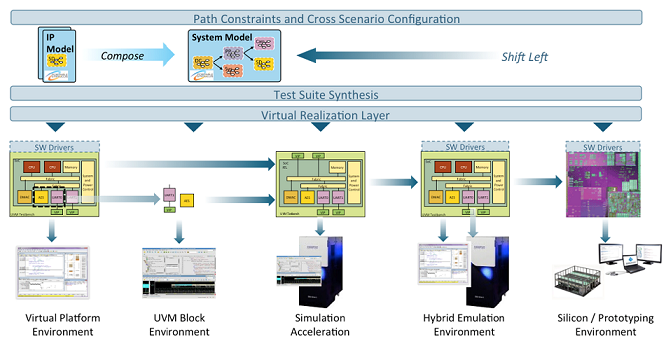

It is unfortunate that design and verification methodologies have often been out of sync with each other, and increasingly so over the past 20 years. The design methodology change that caused one particular divergence was the introduction of design Intellectual Property (IP). With IP, systems were no longer designed and built in a pseudo top-down manner; instead, they were conceived at a higher level and constructed bottom-up, in a 'lego-like' manner, by choosing appropriate blocks that implement the necessary functions. In many ways, this construction approach also led us toward today's SoC architectures, with an array of processing elements tied together by buses and memory. That is no surprise to anyone, because it mimicked the architecture of discrete compute systems, with everything moved onto a single piece of silicon. This approach also provided a natural interface and encapsulation for many IP blocks.

While this transformation was happening, verification was migrating toward constrained random test pattern generation. It appeared to fit the IP strategy because each block had a fairly regular interface, the bus, through which low-level control could be exerted on its functionality. It also removed the processor, which slowed down simulation, from the equation. This was perhaps a false saving, because hardware/software co-verification became available soon afterward. Yet it was still argued that, because the compartmentalized functionality in an IP block had to be verified exhaustively, you did not want the constraints on the bus that a processor and software would impose.

This strategy was somewhat flawed. System-level verification problems quickly became more complex, especially once the SoC progressed beyond being a simple, single-threaded engine controlling a bunch of accelerators. When multiple processors became commonplace within an SoC, interactions between processors and blocks began to cause problems.
Some were functional, some were performance-related, and others concerned the power consumption and thermal management of the chip.

The verification methodology had another flaw. Models created to verify a single block could not easily be integrated, because encapsulation had not been properly thought through on the verification side. Band-Aids were applied to the constrained random methodology to address some of these issues, but the fundamental methodology could not be changed to properly address system-level integration verification.

Addressing this problem with our test suite synthesis technology has been Breker's focus for more than a decade. In most cases, the processor is intimately involved in verification, and many of our users verify their systems from the inside out rather than with the outside-in methodology of the past. Our success with this methodology led us to help form, and donate technology to, the effort that created Accellera's Portable Stimulus Standard (PSS). Many of the participants in that standardization effort came from the earlier constrained random era, so it should come as no surprise that the standard does not fully address the capabilities necessary for a successful system-level verification methodology.

One problem is that, while block-level verification should concentrate on finding all design bugs, that is not the goal at the system level. There, the goal is to show that the necessary functionality works. Graphs can define a block's functionality, but the methodology does not fully work if you want to support an IP reuse model. If each IP block comes with its corresponding PSS verification graph, that graph represents all the functions the block can perform. A higher-level graph then becomes the combination of the graphs for each of the IP blocks. But not all functions from all blocks are required, or even wanted, in the system.
However, the top-level graph does not know that, and the whole point of being able to import and join graphs is to avoid rewriting models. The standard does not address how to remove capabilities from a graph without rewriting it. Users of Breker's tool do not have to live with that limitation.

Consider a hypothetical system with a camera, an encryption engine, a JPEG converter and an SSD interface. While the complete graph would allow an encrypted JPEG of an image taken by the camera to be stored on the SSD, a path constraint may declare that scenario impossible, so no test would be created for it. Tests would still be created that store either a JPEG of the image or an encrypted image on the SSD.

We call this concept a path constraint (together with the corresponding path coverage), and it overlays a system-level graph. It can be used to show which paths are not important and should be ignored when constructing tests. The concept is similar to the way a UPF file overlays a SystemVerilog model of the design. This constraint overlay means that irrelevant tests will not be created, and that the resulting graph can be used to show meaningful coverage.

With this type of capability, we can add, remove and configure scenario segments in a graph without rewriting it. Product families can define the list of available IP, along with the capabilities that are not part of each product variant. Unlike regular PSS constraints, these path constraints operate across the entire graph, which greatly adds to their flexibility and power. We added this value on top of our test synthesis capabilities because it is the type of capability our users have told us they need.

In this era of 'shift-left,' when engineering teams strive to get as much done as early in the process as possible, this approach provides major efficiencies. A team can get a system testbench running on a virtual platform at the start of a project and provide sections of it to the block design groups for those SoC parts that must be built from scratch.
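
To make the path-constraint idea concrete, here is a minimal Python sketch built on the hypothetical camera, encryption, JPEG and SSD system described above. It is neither PSS syntax nor Breker's implementation: the per-IP graph fragments, the action names (`camera_capture`, `jpeg_encode`, `encrypt`, `ssd_store`) and the functions `compose`, `paths`, `no_encrypted_jpeg`, `synthesize` and `path_coverage` are all illustrative assumptions, and the graph is assumed to be acyclic. The fragments are merged into a system graph without being edited, and the path constraint is applied as a separate overlay that filters whole paths, so the encrypted-JPEG scenario is never turned into a test and does not count against coverage.

```python
from typing import Callable, Dict, Iterator, List, Sequence, Set, Tuple

# Hypothetical model: each action maps to the actions that may legally follow it.
# Action names and fragments are illustrative only (not PSS or Breker syntax).
Graph = Dict[str, Set[str]]
Path = Tuple[str, ...]
PathConstraint = Callable[[Path], bool]

# One graph fragment per IP block, as if each block shipped its own model.
CAMERA: Graph = {"camera_capture": {"jpeg_encode", "encrypt"}}
JPEG: Graph = {"jpeg_encode": {"encrypt", "ssd_store"}}
CRYPTO: Graph = {"encrypt": {"ssd_store"}}
SSD: Graph = {"ssd_store": set()}


def compose(*fragments: Graph) -> Graph:
    """Union the per-IP fragments into a system graph without modifying them."""
    system: Graph = {}
    for fragment in fragments:
        for action, successors in fragment.items():
            system.setdefault(action, set()).update(successors)
    return system


def paths(graph: Graph, node: str, end: str, prefix: Path = ()) -> Iterator[Path]:
    """Depth-first enumeration of every node-to-end path (graph assumed acyclic)."""
    path = prefix + (node,)
    if node == end:
        yield path
        return
    for nxt in sorted(graph.get(node, set())):
        yield from paths(graph, nxt, end, path)


def no_encrypted_jpeg(path: Path) -> bool:
    """Hypothetical path constraint: this product variant never stores an encrypted JPEG."""
    return not ("jpeg_encode" in path and "encrypt" in path)


def synthesize(graph: Graph, start: str, end: str,
               constraints: Sequence[PathConstraint]) -> List[Path]:
    """Keep only the paths that satisfy every constraint in the overlay."""
    return [p for p in paths(graph, start, end) if all(c(p) for c in constraints)]


def path_coverage(legal: Sequence[Path], executed: Sequence[Path]) -> float:
    """Fraction of legal (constrained) paths that were actually exercised."""
    if not legal:
        return 1.0
    return len(set(legal) & set(executed)) / len(set(legal))


if __name__ == "__main__":
    system = compose(CAMERA, JPEG, CRYPTO, SSD)
    print(len(list(paths(system, "camera_capture", "ssd_store"))))  # 3 paths before the overlay
    tests = synthesize(system, "camera_capture", "ssd_store", [no_encrypted_jpeg])
    for test in tests:
        print(" -> ".join(test))
    # camera_capture -> encrypt -> ssd_store
    # camera_capture -> jpeg_encode -> ssd_store
    print(path_coverage(tests, tests[:1]))  # 0.5
```

Because the constraint in this sketch is a predicate over a complete path rather than a rule attached to a single node or edge, it can exclude a combination of functions (here, JPEG conversion plus encryption) while leaving each function reachable on its own. A different product variant could pass a different list of constraints against the same composed graph, leaving the IP fragments untouched.
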
Teams can then configure tests for different verification scenarios throughout the entire flow, moving seamlessly from simulation to emulation where appropriate.

A properly thought-out, system-level constraint and coverage solution, built into the synthesis solver technology and acting as a layer above the standard model so that it does not interfere with the PSS code, is essential for building a cohesive verification intent model. The alternative is a fragmented string of scenarios with little relation to each other. Our users have proven that this is a powerful way to get real efficiencies when using PSS. This capability is available within Breker's test suite synthesis solution, but may be lacking from tools that adhere only to the basic Portable Stimulus Standard. Together we can do this.

Tags: Accellera, Breker Verification Systems, methodology convergence, portable stimulus, Portable Stimulus Standard, PSS, synthesis technology, SystemVerilog, test suite synthesis, testbench, UPF, uvm, verification
Category: Knowledge Depot